| Title | : | Stanford Seminar - Information Theory of Deep Learning, Naftali Tishby |

| Duration | : | 1:24:44 |

| Date of publication | : | |

| Views | : | 77K |

|

|

This theory looks correct! When neural networks became popular, everybody in the scientific computation community eagerly wanted to describe them in their own language. Many achieved limited success. I think the information theory one makes the most sense, because it finds simplicity of information in the complexity of data. It is like how humans think. We create abstract symbols that capture the essence of nature and conduct logical reasoning, which means that the degrees of freedom behind the world should be few, since it is structured. Why did the ML community and industry not adopt this explanation? Comment from : Hanchi Sun |

|

|

RIP Naftali! Comment from : Paritosh Kulkarni |

|

|

44:25 Comment from : Minh Toàn Nguyễn |

|

|

Anybody know what a “pattern” is in information theory? Comment from : Paul Curry |

|

|

such a loss… blessed be his memory Comment from : Alex Cohn |

|

|

23:04 Comment from : Devanand T ദേവാനന്ദ് |

|

|

Amazing talk, thank you! Comment from : Nicky button |

|

|

Oooo! So it is SGD? If I hadn't listened to the Q&A session I wouldn't have understood it all. Now I do. Well, with second-order algorithms (like Levenberg-Marquardt) you won't need all these balls floating to understand what's going on with your neurons. Gradient descent is the poor man's gold. Comment from : Absolute Zero |

|

|

I read another paper, "On the Information Bottleneck Theory of Deep Learning", by Harvard researchers, published in 2018, and they hold a very different view. It seems it's still unclear how neural networks work. Comment from : Alex Kai |

|

|

1:22:31 - thesis statement about how to choose mini batch size Comment from : phaZZi |

|

|

This is my personal summary:
00:00:00 History of Deep Learning
00:07:30 "Ingredients" of the Talk
00:12:30 DNN and Information Theory
00:19:00 Information Plane Theorem
00:23:00 First Information Plane Visualization
00:29:00 Mention of Critics of the Method
00:32:00 Rethinking Learning Theory
00:37:00 "Instead of Quantizing the Hypothesis Class, let's Quantize the Input!"
00:43:00 The Information Bottleneck
00:47:30 Second Information Plane Visualization
00:50:00 Graphs for Mean and Variance of the Gradient
00:55:00 Second Mention of Critics of the Method
01:00:00 The Benefit of Hidden Layers
01:05:00 Separation of Labels by Layers (Visualization)
01:09:00 Summary of the Talk
01:12:30 Question about Optimization and Mutual Information
01:16:30 Question about Information Plane Theorem
01:19:30 Question about Number of Hidden Layers
01:22:00 Question about Mini-Batches
Comment from : krasserkalle |

|

|

Can he use deep learning to fix the audio problems of this video? Comment from : Dr_Amir |

|

|

When was this talk given? Has he published his paper yet? I found nothing online so far, but maybe I just didn't see it. Comment from : Julian Büchel |

|

|

Aah, this is so relaxing. Thank you! Comment from : applecom1de |

|

|

"Learn to ignore irrelevant labels" yes intriguing Comment from : Zessa Z Zenessa |

|

|

I wonder if, based on this, we can create better training algorithms. For example, the effectiveness of dropout may have a connection to this theory. Dropout may introduce more randomness in the "diffusion" stage of training. Comment from : FlyingOctopus0 |

|

|

If the theories are true, maybe we can compute the weights directly without iteratively learning them via gradient descent. Comment from : Binyu Wang |

|

Stanford Seminar - Straddling a Medical Device IoT Startup Between Taiwan and the United States РѕС‚ : Stanford Online Download Full Episodes | The Most Watched videos of all time |

|

Stanford Seminar - New Trends in Social Entrepreneurship in India РѕС‚ : Stanford Online Download Full Episodes | The Most Watched videos of all time |

|

Tolman sign gestalt learning theory | Tolman sign learning theory in hindi | Latent learning theory РѕС‚ : TETology Download Full Episodes | The Most Watched videos of all time |

|

Social learning theory| Social learning theory in urdu|Social learning theory albert bandura РѕС‚ : Psycho Informative Download Full Episodes | The Most Watched videos of all time |

|

Dope Shope ( LYRICS ) | Yo Yo Honey Singh | Deep Money | International Villager | Deep Lyrics РѕС‚ : Deep Lyrics Download Full Episodes | The Most Watched videos of all time |

|

Sheri Sheppard of Stanford is U.S. Professor of the Year РѕС‚ : Stanford University School of Engineering Download Full Episodes | The Most Watched videos of all time |

|

Statistics for Data Science | Probability and Statistics | Statistics Tutorial | Ph.D. (Stanford) РѕС‚ : Great Learning Download Full Episodes | The Most Watched videos of all time |

|

Quality Assurance in E Learning Source (E Learning Capacity Building Seminar Series) РѕС‚ : EL COP Download Full Episodes | The Most Watched videos of all time |

|

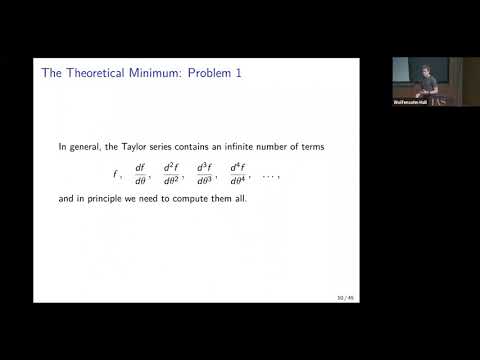

The Principles of Deep Learning Theory - Dan Roberts РѕС‚ : Institute for Advanced Study Download Full Episodes | The Most Watched videos of all time |

|

Deep Learning Theory Session. Ilya SutskeverIlya Sutskever РѕС‚ : TAUVOD Download Full Episodes | The Most Watched videos of all time |