| Title | : | Neural networks [8.1] : Sparse coding - definition |

| Duration | : | 12:05 |

| Date of publication | : | |

| Views | : | 46K |

|

|

About the illustration with the character images: where can I find the paper from your colleague? Comment from : Charlotte Chuang |

|

|

Thanks, very clear explanation! Comment from : GABRIEL RAMÍREZ ORIHUELA |

|

|

What happens to the neurons that are not firing in sparse coding? Comment from : Sindhya Bishwakarma |

|

|

Sir, if you know of a blog discussing it, please mention it. Comment from : Yahya Khan |

|

|

God bless you for your great contribution to sparse coding. Sir, I need your help with how a sparse LSTM can work. I did not find an implementation of it on any blog. I would be very thankful for your explanation. Comment from : Yahya Khan |

|

|

awsm!!! Comment from : Naman Chauhan |

|

|

Brilliantly explained. I will be citing this video in one of the blogs I am writing :) Comment from : Akash PB |

|

|

thank you very much! Helped me a lot! :) Comment from : palmino121 |

|

|

5:40 good thanks :) Comment from : Alexis B |

|

|

I don't understand why you state the L1 norm as a regularization. Every other source dealing with sparsity stated L0 as a regularization. L1 would, for example, prioritize solutions such as [0.1, 0.1, 0.1, 0.1] instead of, for example, [1, 0, 0, 1], which is clearly sparser. Isn't that correct? Comment from : SpacelessSpace |
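
The comparison raised in this comment can be checked numerically. The following is a minimal sketch, assuming the first vector in the comment is meant to have entries of 0.1 (the decimal points appear to have been lost on this page); the helper names `l0_norm` and `l1_norm` are hypothetical and do not come from the video.

```python
def l0_norm(v, tol=1e-12):
    """Count the entries of v whose magnitude exceeds tol (the L0 'norm')."""
    return sum(1 for x in v if abs(x) > tol)


def l1_norm(v):
    """Sum of the absolute values of the entries of v (the L1 norm)."""
    return sum(abs(x) for x in v)


# Assumed reading of the two example codes from the comment.
dense = [0.1, 0.1, 0.1, 0.1]
sparse = [1.0, 0.0, 0.0, 1.0]

for name, v in (("dense", dense), ("sparse", sparse)):
    print(name, "L0 =", l0_norm(v), "L1 =", round(l1_norm(v), 6))
```

On the penalty alone, the dense vector has the smaller L1 norm but the larger L0 count, which is the point the comment makes. In sparse coding the penalty is weighed against the reconstruction error, so comparing penalties in isolation does not decide which code is selected.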

|

|

@3:48 "however the L1 penalty here wouldn't be happy, it would be high". Thanks for making the distinction. Comment from : Kiuhnm Mnhuik |

|

|

Thanks Hugo, I am confused about the structure of this unsupervised neural network. x is the input layer, h is the hidden layer, and x_hat is the output layer (like an autoencoder), am I right? And where is the dictionary matrix (D)? Or is it not structured by propagation through neural layers? Thank you again. Comment from : HG L |

|

|

Thank you so much, Prof. Larochelle, for explaining so well. Comment from : snigdha purohit |

|

|

Thanks for defining sparse coding! But what is the motivation behind obtaining sparse sources, versus having dense sources as with PCA? Comment from : Tam Tran |

|

|

Is there a difference between sparse coding and sparse representation? Comment from : Shiori Watanabe |

|

|

Hi Hugo, I have a black-and-white image of a tracked frisbee and I want to train my network to identify it. How can I do that? Comment from : ankit kumar |

|

|

Hi Hugo, if you are reading this comment, can you tell me why you categorize sparse coding as a neural network? It doesn't seem like a graphical model. Is there a historical reason? Comment from : hNeg |

|

|

Good explanation! :-) I'm new to neural networks. Is sparse coding a part of a sparse autoencoder? Comment from : Korkez Korr |

|

|

slides? Comment from : Piruthvi Chendur Palanisamy |

|

|

Hello friend, thanks for your videos, they are very useful. Can you make a video explaining RICA and ICA encoders? This would help me a lot, thanks. Comment from : vito135c |

|

|

useful, thx Comment from : Burned_Box |

|

|

very useful! thx Comment from : Enoch Sit |

![Neural networks [8.5] : Sparse coding - dictionary learning algorithm](https://i.ytimg.com/vi/PzNMff7cYjM/hqdefault.jpg) |

Neural networks [8.5] : Sparse coding - dictionary learning algorithm by Hugo Larochelle |

![Neural networks [8.6] : Sparse coding - online dictionary learning algorithm](https://i.ytimg.com/vi/IePxTepLvQc/hqdefault.jpg) |

Neural networks [8.6] : Sparse coding - online dictionary learning algorithm by Hugo Larochelle |

|

Coding for Kids | What is coding for kids? | Coding for beginners | Types of Coding | Coding Languages by LearningMole |

|

Simple, Efficient and Neural Algorithms for Sparse Coding by Simons Institute |

|

Feature learning with matrix factorization and neural networks by Aaron Richter |

|

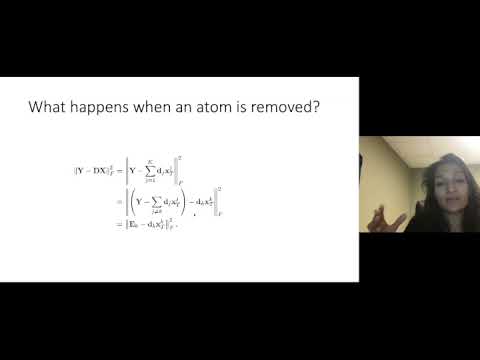

Sparse Coding and Dictionary Learning | Unsupervised Learning for Big Data by Krishnaswamy Lab |

|

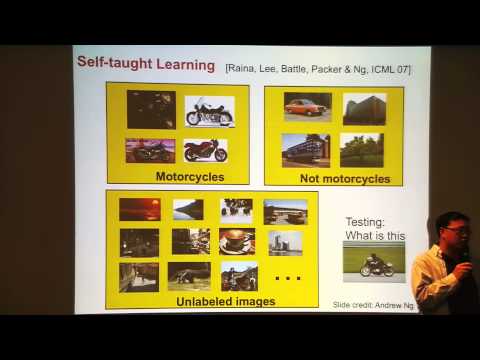

Kai Yu: "Image Classification Using Sparse Coding, Pt. 1" by Institute for Pure & Applied Mathematics (IPAM) |

|

07L – PCA, AE, K-means, Gaussian mixture model, sparse coding, and intuitive VAE by Alfredo Canziani |

|

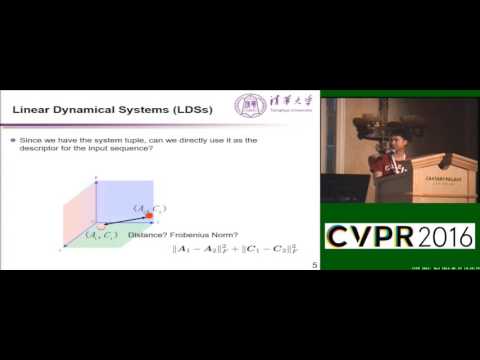

Sparse Coding and Dictionary Learning With Linear Dynamical Systems by ComputerVisionFoundation Videos |

|

How to Start Coding | Programming for Beginners | Learn Coding | Intellipaat by Intellipaat |